At the end of May, Snap debuted the fourth-generation Snap Spectacles, their latest salvo in the increasingly hot augmented reality platform war. Compared to the measured commercial launches of previous generations, this one felt a little…rushed. Almost as if they wanted to preempt any announcements from elsewhere in the industry.

But who knows, it’s really a dev platform rather than a consumer product launch, after all. This latest edition is “not for sale” but available to creators who apply for a development unit. While they’ve been starting to show up in the wild, I don’t have my hands on one… yet (Snap, call me 👻🕶), so I wanted to take a drive down memory lane from the original Spectacles to today, with a special focus on a product teardown and curated content examples for the currently shipping Gen 3 Spectacles.

Snap is a fascinating company. Despite (or perhaps because of) a penchant for hyperbole, their work shows a depth of conceptual conviction rarely matched in the industry. I love the dedication to launching into the camera, the stubbornly hard-to-learn interface, their embrace of ephemerality, and the Spectacles product line. They clearly think deeply about emotional experiences and the evolving personal relationship between their users and content.

The evolutionary path of Spectacles is a case study in how a design-led company lays the foundation for a new product category through thoughtful iteration on interface design, engineering architecture, and content.

How it started

Back in 2016, Snapchat renamed itself Snap Inc., started calling itself a “camera company”, and introduced its first piece of hardware: the Snap Spectacles. For $130, you could get a pair of shades that would record a video with the touch of a button and wirelessly sync with your phone for sharing. The styling was fun and on-trend.

You could only get them from drops of SnapBot, a vending machine deposited in secret locations at unannounced times. It was super fun, generated a bunch of buzz, and was one of my favorite product launches in recent times. Ah 2016…we were all so young, so bright-eyed.

From the Snap annual report: “We believe that reinventing the camera represents our greatest opportunity to improve the way that people live and communicate. We contribute to human progress by empowering people to express themselves, live in the moment, learn about the world, and have fun together.” Whew.

But things didn’t go especially well. Setting aside for a moment the social awkwardness of conspicuously pointing a face-mounted camera at everyone in your life, the biggest thing missing from the product experience IMO was the users themselves as video subjects. Point-of-view video isn’t always as compelling as an ego-projecting video of yourself on camera.

Mass-market success was notoriously limited: the company sold around 220,000 pairs, roughly 50% of users churned after a month, and Snap took a $40M write-off with hundreds of thousands of unsold units in inventory. Even though CEO Evan Spiegel described them as “a toy,” it was a toy the company put a lot of weight behind.

Onward

Many companies would have stopped dead in their tracks after the first version. Not Snap.

To understand their level of dedication, go back and listen to Evan describe his near spiritual experience in 2016 with an early prototype in Big Sur with his fiancee:

“It was our first vacation, and we went to Big Sur for a day or two. We were walking through the woods, stepping over logs, looking up at the beautiful trees. And when I got the footage back and watched it, I could see my own memory, through my own eyes — it was unbelievable. It’s one thing to see images of an experience you had, but it’s another thing to have an experience of the experience. It was the closest I’d ever come to feeling like I was there again.”

He’s chasing something experiential and fundamentally personal here. Spectacles aren’t designed to record videos, they’re designed to bottle experiences, to capture memories.

Snap soldiered on with a modest update in mid-2018: Spectacles v2. Priced at $150, they had a significantly more understated frame design, were waterproof, and, alongside an upgrade in camera resolution, added the ability to snap still photos for the first time (no, that wasn’t a feature of gen 1). Snap was more coy about sales numbers this time around, but anecdotally I suspect they were not a wild success, based on the number of people I saw wearing them (zero) and the number of hardware execs leaving the company (non-zero).

Spectacles 3…D

But they’re not done. Not by a long shot. This is where Snap takes a big leap forward.

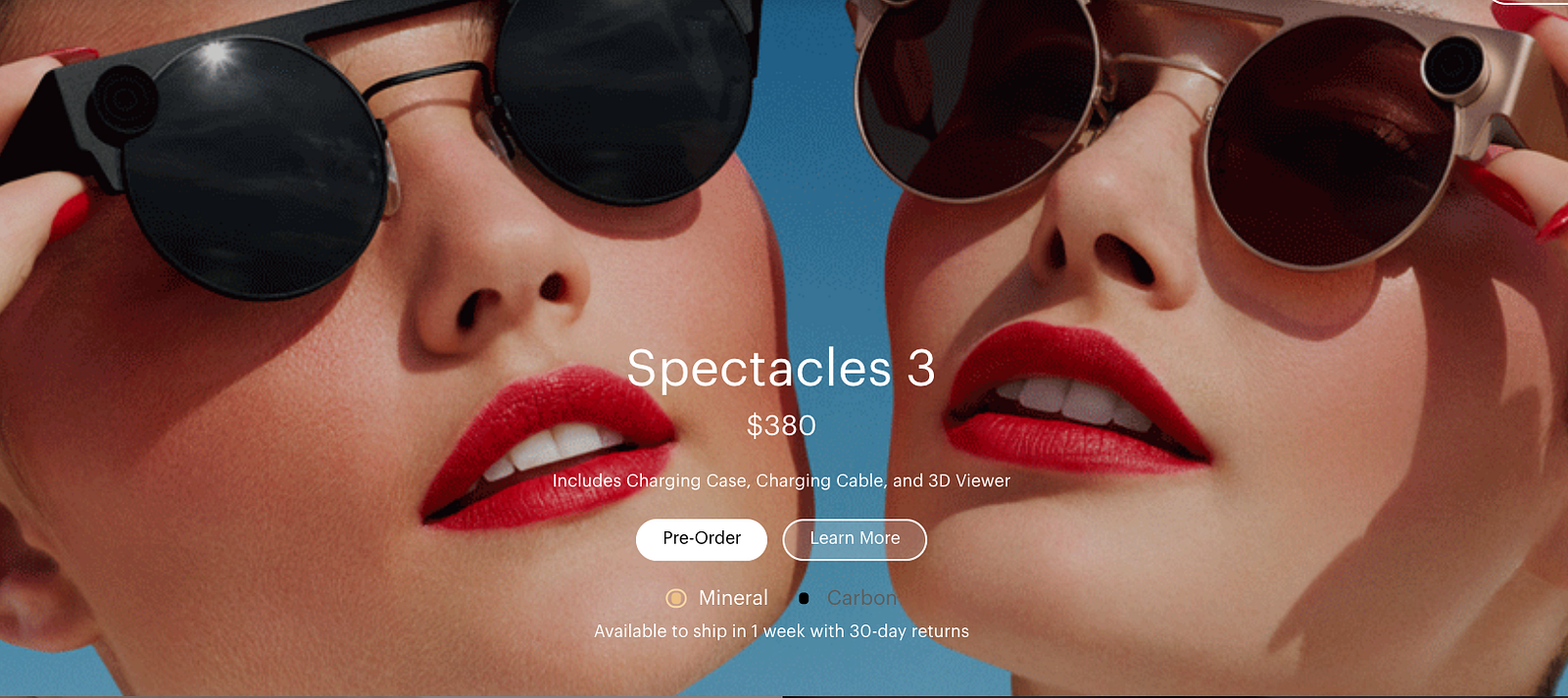

In November 2019, Snap announced the new Snap Spectacles 3 with the headline “Capture your world in 3D.” This generation featured a new design with metal frames and not one, but two cameras. It feels like we’re going through a razor-blade-style arms race with the number of cameras on mobile devices these days…the real question is always what do those cameras *do* for me?

The Spectacles’ two cameras allow the device to capture wide field-of-view video from both angles, reconstruct stereoscopic video, and build a 3D depth map of physical objects in front of the wearer. Users can create “3D selfies” and, with the extra depth channel in addition to RGB, use special filters to create effects that play off of the real-world positioning of objects. Snap launched Lens Studio to give users tools for making AR filters, and by mid-2020, more than 735,000 Lenses had been created.
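For readers curious how two cameras turn into a depth map, here is a minimal sketch of the textbook stereo relation: the same point lands at slightly different horizontal positions in the left and right images, and that shift (the disparity) is inversely proportional to distance. This is the generic pinhole-stereo formula, not Snap's actual pipeline, and the focal length and camera spacing used below are illustrative assumptions rather than measured values.

```python
import math


def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Distance to a point seen by two parallel cameras (pinhole stereo model).

    disparity_px: horizontal shift of the point between left and right images
    focal_px:     focal length expressed in pixels (assumed, not a Snap spec)
    baseline_m:   distance between the two cameras in meters (assumed)
    """
    if disparity_px <= 0:
        # Zero shift means the point is effectively infinitely far away.
        return math.inf
    return focal_px * baseline_m / disparity_px


# Hypothetical numbers purely for illustration.
ASSUMED_FOCAL_PX = 650.0
ASSUMED_BASELINE_M = 0.14

for disparity in (130.0, 65.0, 13.0):
    z = depth_from_disparity(disparity, ASSUMED_FOCAL_PX, ASSUMED_BASELINE_M)
    print(f"disparity {disparity:6.1f} px -> depth {z:.2f} m")
```

The takeaway is that nearby objects produce large shifts and are easy to place in depth, while distant objects produce shifts of only a few pixels, so depth effects work best on things close to the wearer.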

Back to Spiegel: “What’s really exciting about this version is that, because V3 has depth, we’re starting to actually understand the world around you,” he said. “So those augmented reality effects are not just a 2D layer. It actually integrates computing into the world around you. And that is where, to me, the real turning point is.”

“I do think this is the first time that we’ve brought all the pieces of our business together, and really shown the power of creating these AR experiences in Lens Studio and deploying them through Spectacles,” Spiegel said. “And to me, that is the bridge to computing overlaid on the world.”

At launch, there was some not-so-subtle skepticism and…shade being thrown around in reviews about the market appetite for $380 camera glasses.

“Spectacles 3 is a limited-production run. We’re not looking for massive sales here. We’re targeting people who are excited about these effects — creative storytellers,” said Matt Hanover of the Snap Lab team.

Let’s take a close look at the product itself and some content examples…

ID+UX design details

As you’d expect at this price point, the styling of the specs is more elevated and serious than the previous versions. Reminiscent of a time-traveling airship pilot trying to blend in on Abbot Kinney, or maybe Ruby Rhod sporting a vintage Issey Miyake mod, the glasses are an eerie cocktail of voyeurism, fashion, and technofetishism.

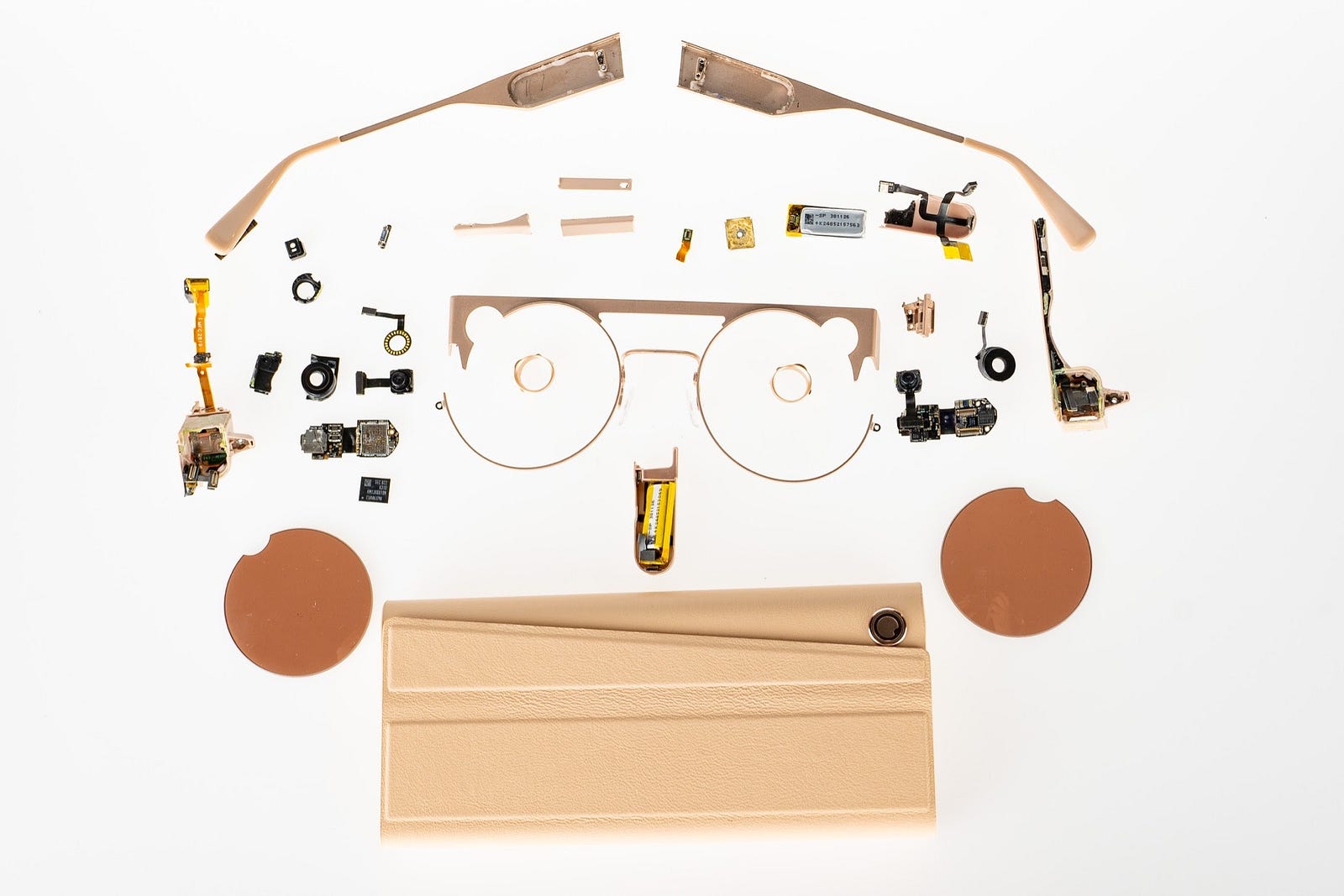

The product has several mixed materials coming together: steel frames, lenses, injection molded plastic, cameras, screws, batteries, circuit boards, and hinges. The fit and finish is quite elegant and well controlled.

In addition to a charging case, a USB-C cable, and a microfiber cloth (to dry the polarized lenses or your eyes from the price tag), the product also comes with a Google Cardboard-style phone viewer, so you can rewatch your memories in full stereoscopic effect.

The collapsible charging case is nicely executed in leather with beautiful little touches like a charging LED animation.

The frames have contacts on the bridge that snap in with magnets automatically when you stash the shades. This charging paradigm for portable/wearable devices has proven to be a clear winner, and the first-gen Spectacles deserve credit for being on to this modality from day one. The case carries 4+ full charges of the frame’s battery.

The details of the user interface are well considered for both the wearer and the people on-camera. The device has a button near the hinge on each temple allowing you to start shooting a video quickly and thoughtlessly with either hand.

There’s an LED inside the frames to provide feedback to the wearer that video is rolling, and an animated ring for people in front of the camera to know when they’re being recorded or not.

These design details point to a company thinking long-term about the interaction paradigm for this class of device, not rushing out an MVP. Snap is in this for the long haul.

The guts

You see a similar story told by the internals of the device. It’s amazing to look at the specs for the product and see how they fit everything in such a tight form factor.

The spec sheet:

- Photos: 1728 x 1728 px

- Video recording: 1216 x 1216 px at 60 fps

- Wide field of view: 105° 2D, 86° 3D

- F-stop: f/2.2

- 70 videos (capture + sync) per full charge

- Four built-in microphones for noise reduction / beam forming

- Bluetooth 5.0

- 802.11ac Wi-Fi, 2.4 / 5 GHz

- Built-in GPS and GLONASS

- 4GB flash storage

- Prescription lenses with 100% UV protection
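To put a few of those numbers in perspective, here is a rough back-of-envelope sketch. The resolution, frame rate, field of view, and videos-per-charge figures come from the spec sheet above, and the four case charges from the "4+" mentioned earlier. Treating the 86° figure as the horizontal field of view across the full 1216 px frame, and assuming both cameras record at the full 60 fps, are my assumptions, not something Snap states.

```python
import math

# Figures from the spec sheet above.
PHOTO_SIDE_PX = 1728
VIDEO_SIDE_PX = 1216
VIDEO_FPS = 60
FOV_3D_DEG = 86.0
VIDEOS_PER_CHARGE = 70

# Assumptions (not from Snap): both cameras run at full rate, and the
# case holds exactly 4 recharges (the article says "4+").
NUM_CAMERAS = 2
CASE_CHARGES = 4


def photo_megapixels(side_px: int) -> float:
    """Megapixels of one square still photo."""
    return side_px * side_px / 1e6


def raw_pixels_per_second(side_px: int, fps: int, cameras: int) -> int:
    """Pixels the sensors produce per second, before any compression."""
    return side_px * side_px * fps * cameras


def focal_length_px(side_px: int, fov_deg: float) -> float:
    """Pinhole-model focal length in pixels, assuming fov_deg spans side_px."""
    return (side_px / 2) / math.tan(math.radians(fov_deg) / 2)


print(f"Still photo: {photo_megapixels(PHOTO_SIDE_PX):.2f} MP")
print(f"Raw video rate: {raw_pixels_per_second(VIDEO_SIDE_PX, VIDEO_FPS, NUM_CAMERAS):,} px/s")
print(f"Approx. focal length: {focal_length_px(VIDEO_SIDE_PX, FOV_3D_DEG):.0f} px")
print(f"Videos from case recharges alone: {VIDEOS_PER_CHARGE * CASE_CHARGES}")
```

Under those assumptions, the pair of sensors produces on the order of 177 million raw pixels per second, which gives a sense of how much encoding work is packed behind each camera.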

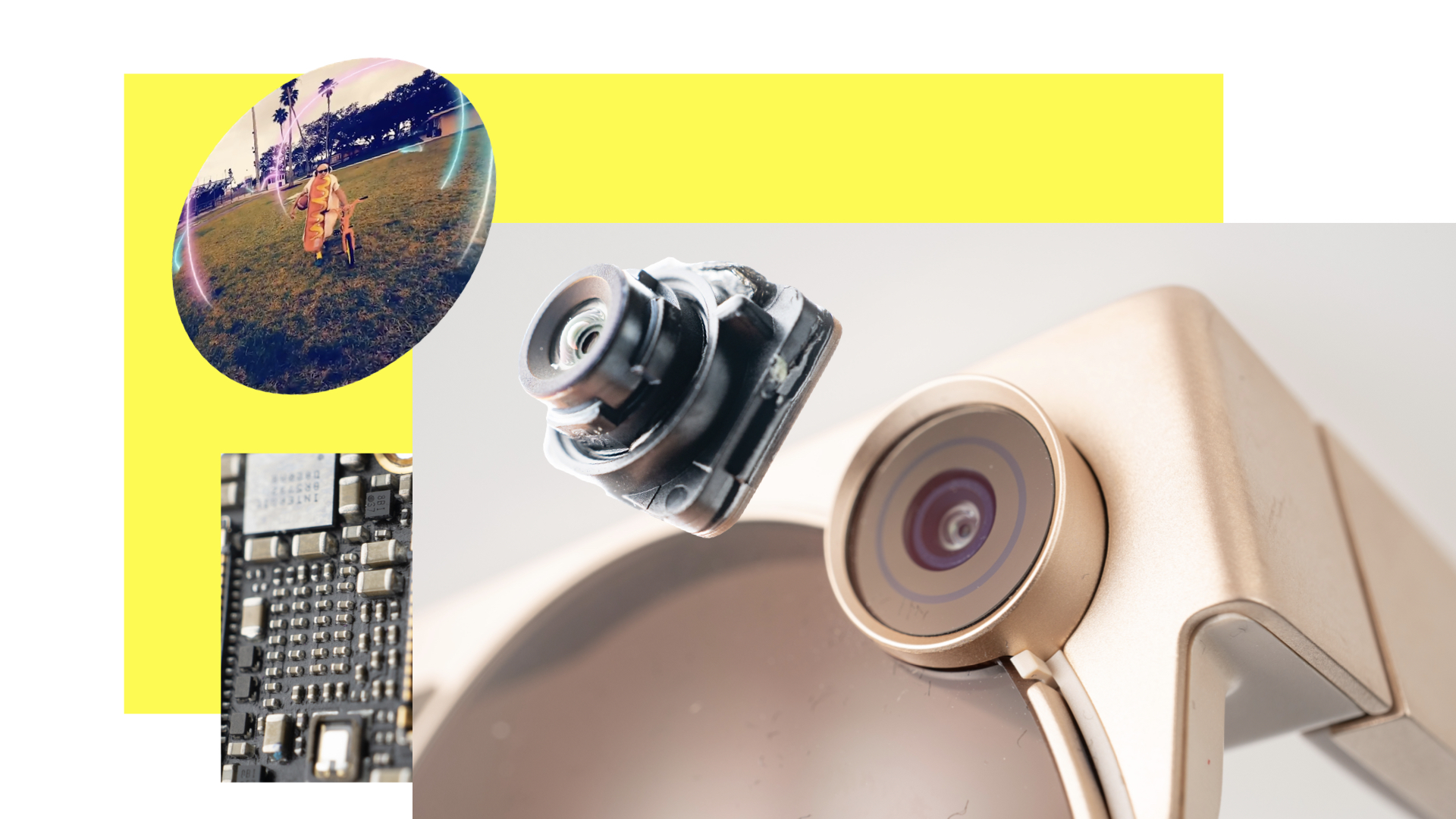

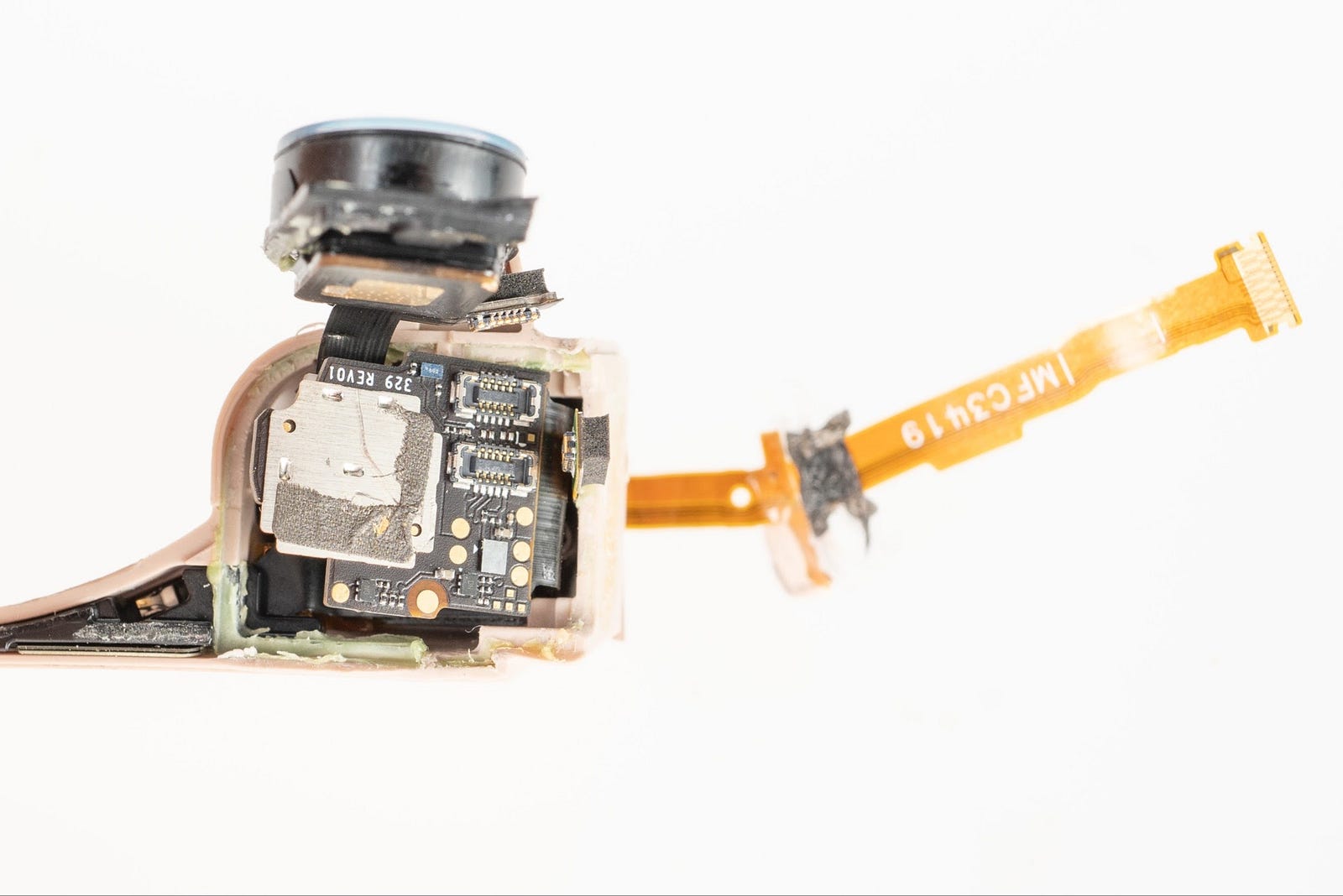

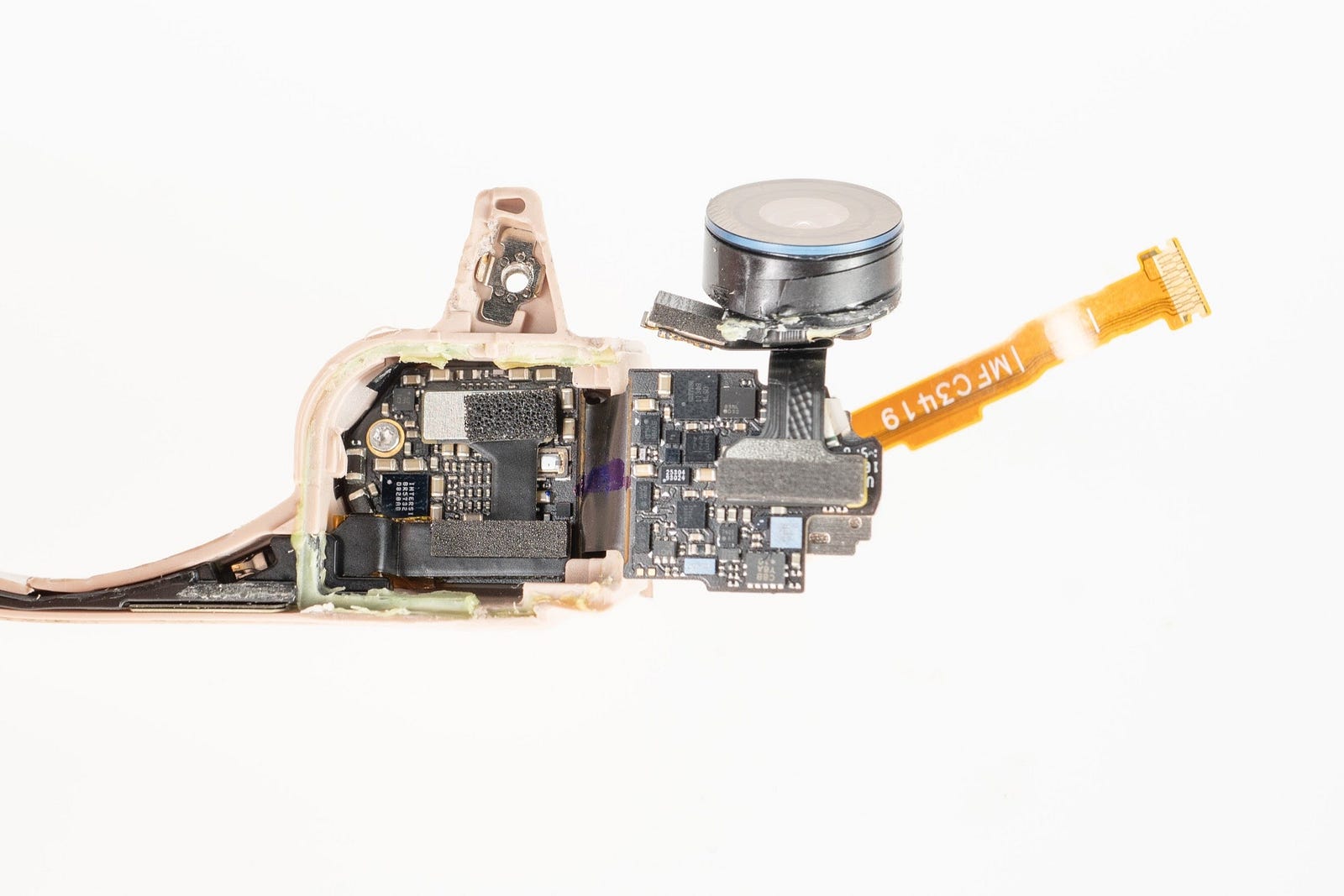

To the dismay of circular design advocates everywhere, tightly integrated wearable products these days are often a messy composite of tape and glue. These Spectacles are no different. You’ll see from the photos that this teardown is more a hatchet job than delicate surgery.

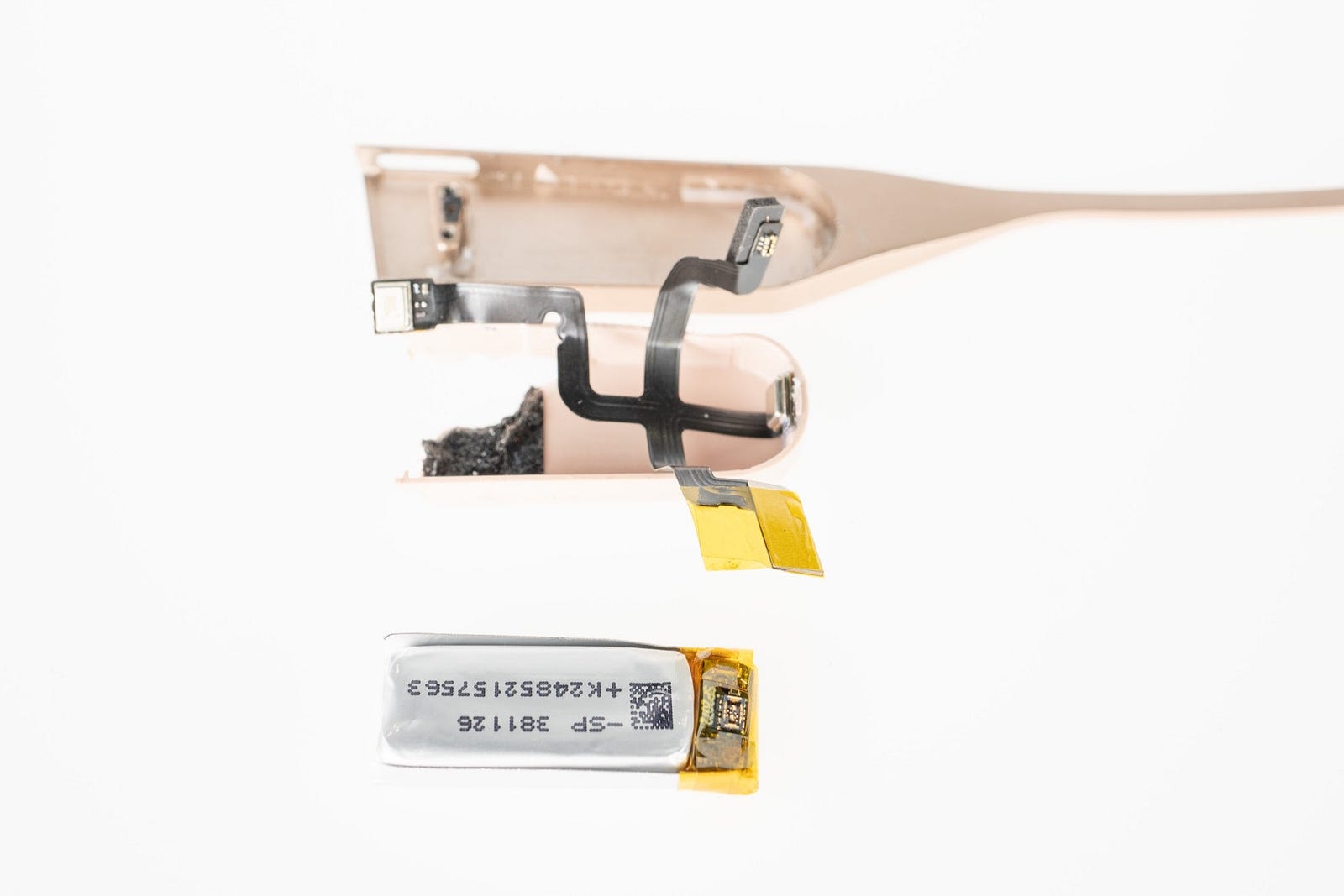

We started by removing the lenses and breaking into the hinge areas on each side. You can see volumes sealed with injection-molded parts on both legs of the glasses. These areas have a four-armed flexible circuit board connecting a battery, the button, and two bottom-ported microphones on each side. You’ll notice the flexes have to pass through the hinges to connect to the bridge of the glasses.

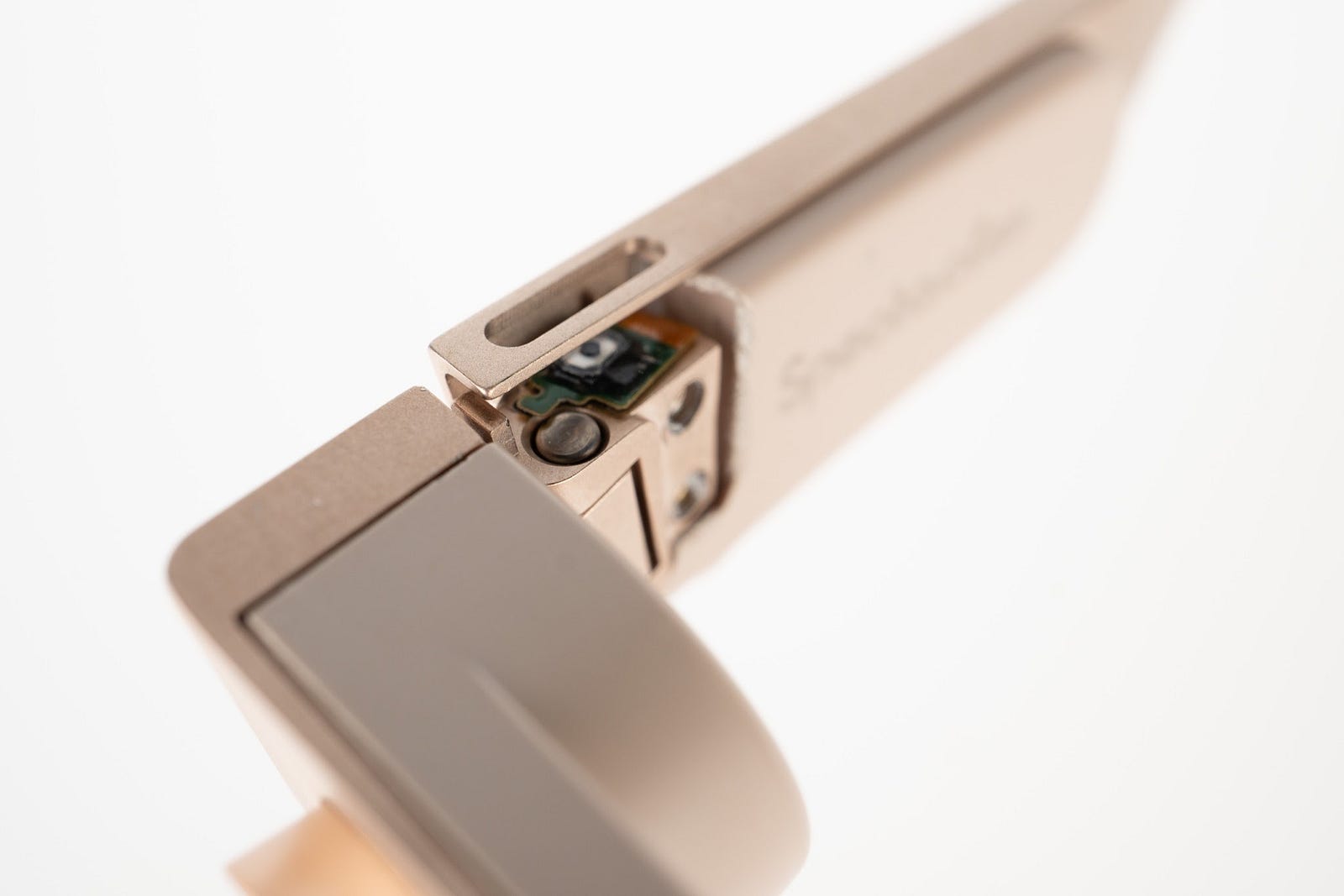

Moving back to the middle bridge section, we removed the front metal enclosure and lens holder to reveal the two camera areas with another flex interconnect passing through the bridge.

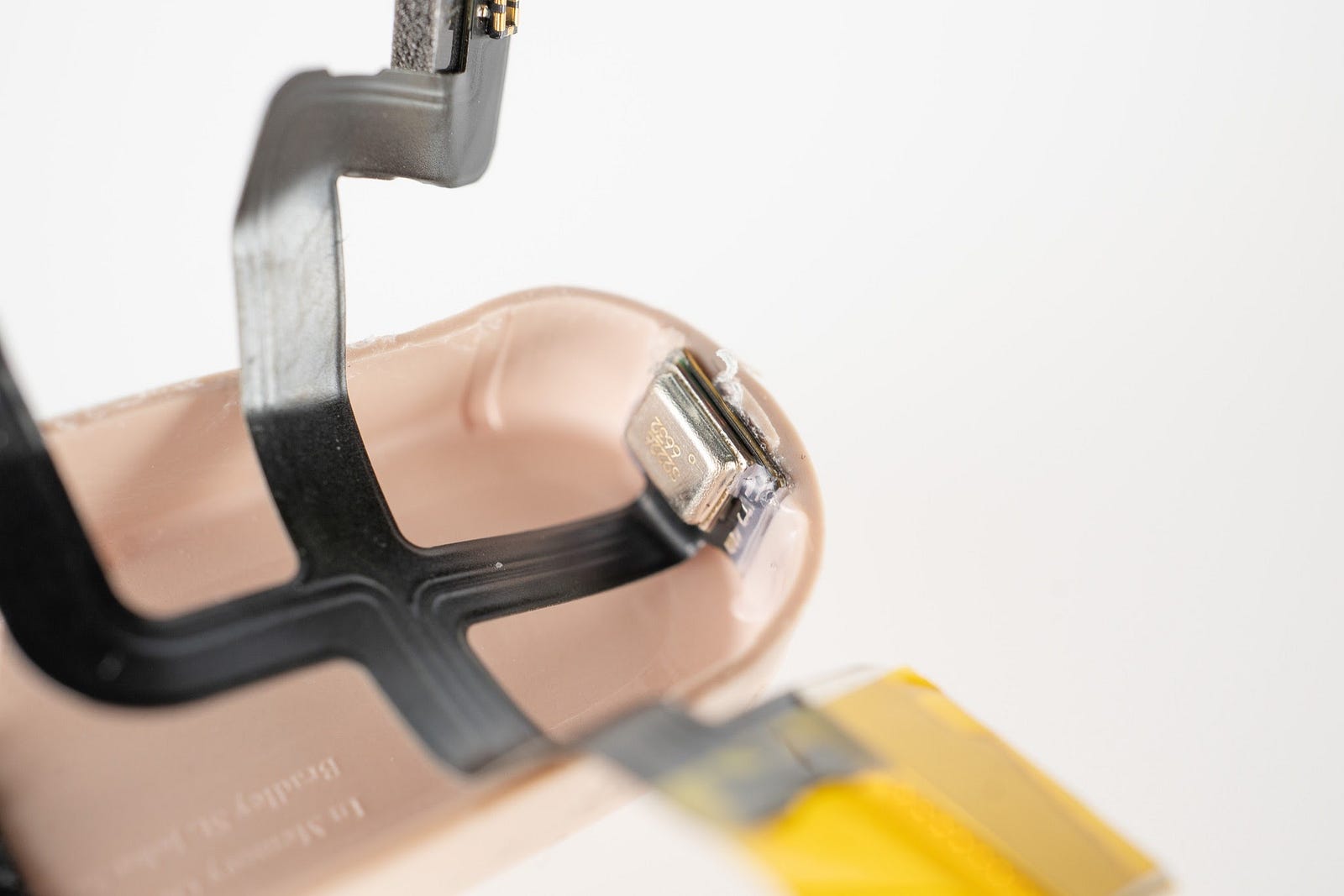

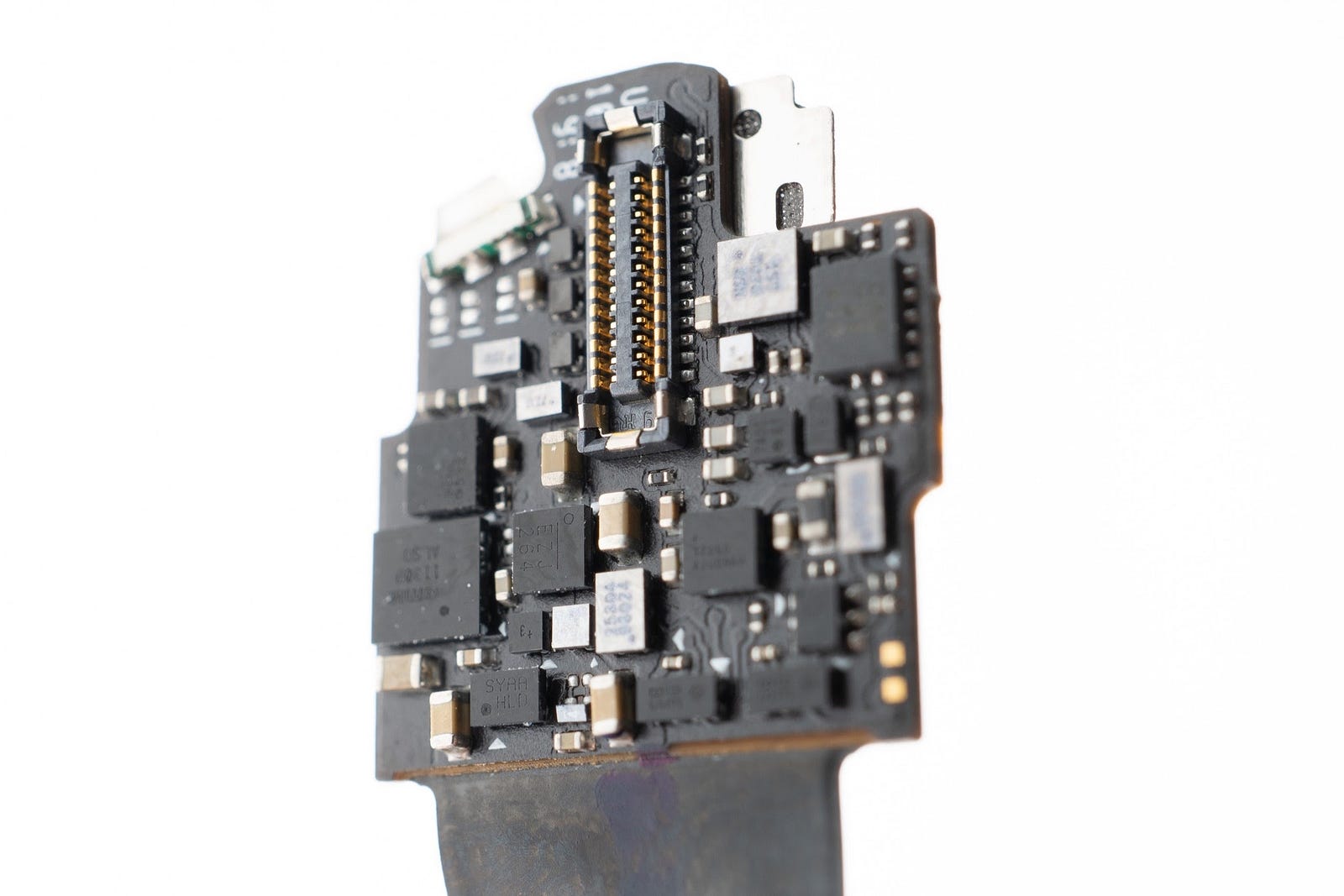

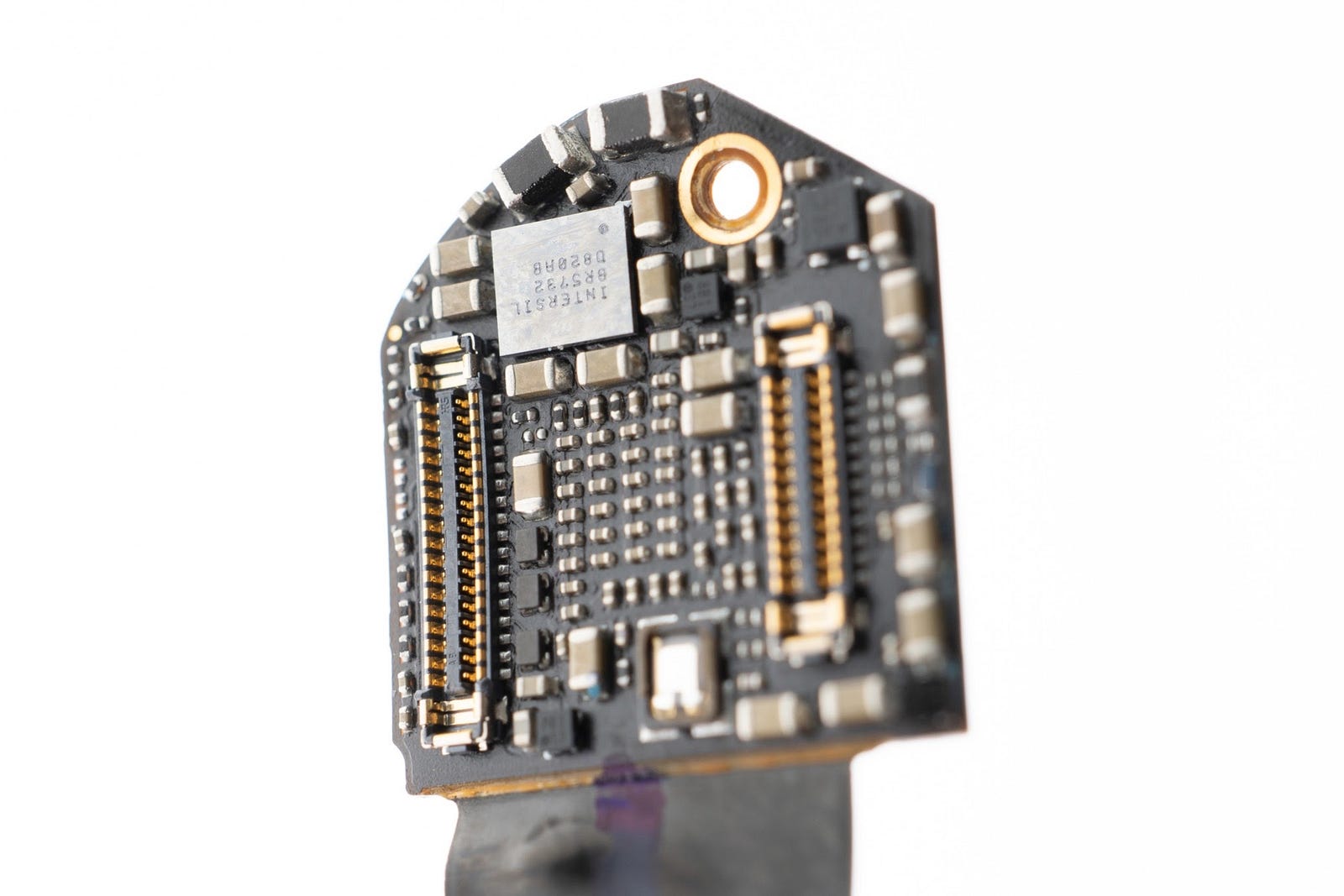

The areas behind each camera are impressively packed by origami-folding a rigid flex board multiple times to layer components into the tight volume. Take a look at a step-by-step unfolding…

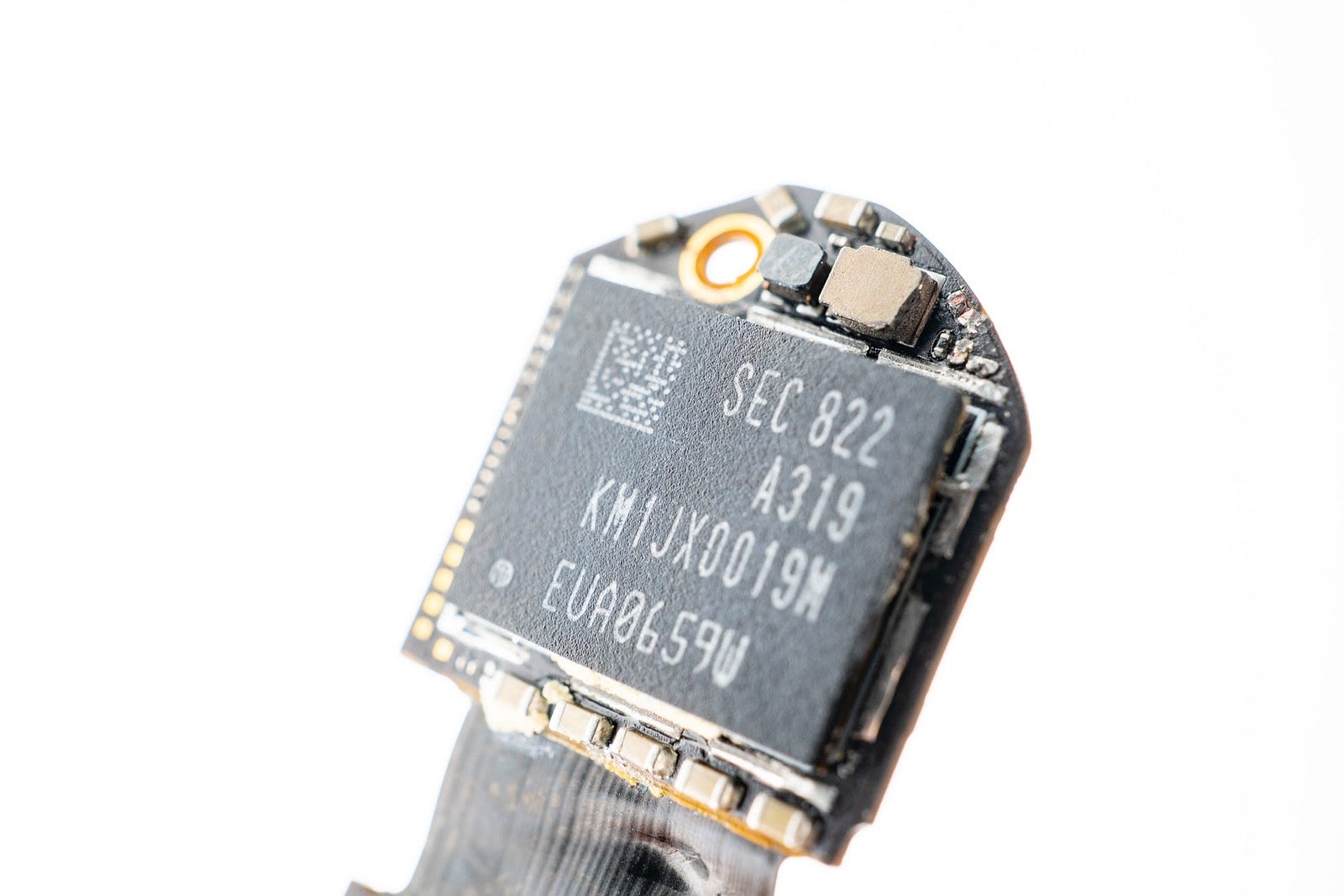

The components on each of the boards are very tightly placed and routed. You can see how the planform area behind the camera is basically set by the size of the NAND flash chip.

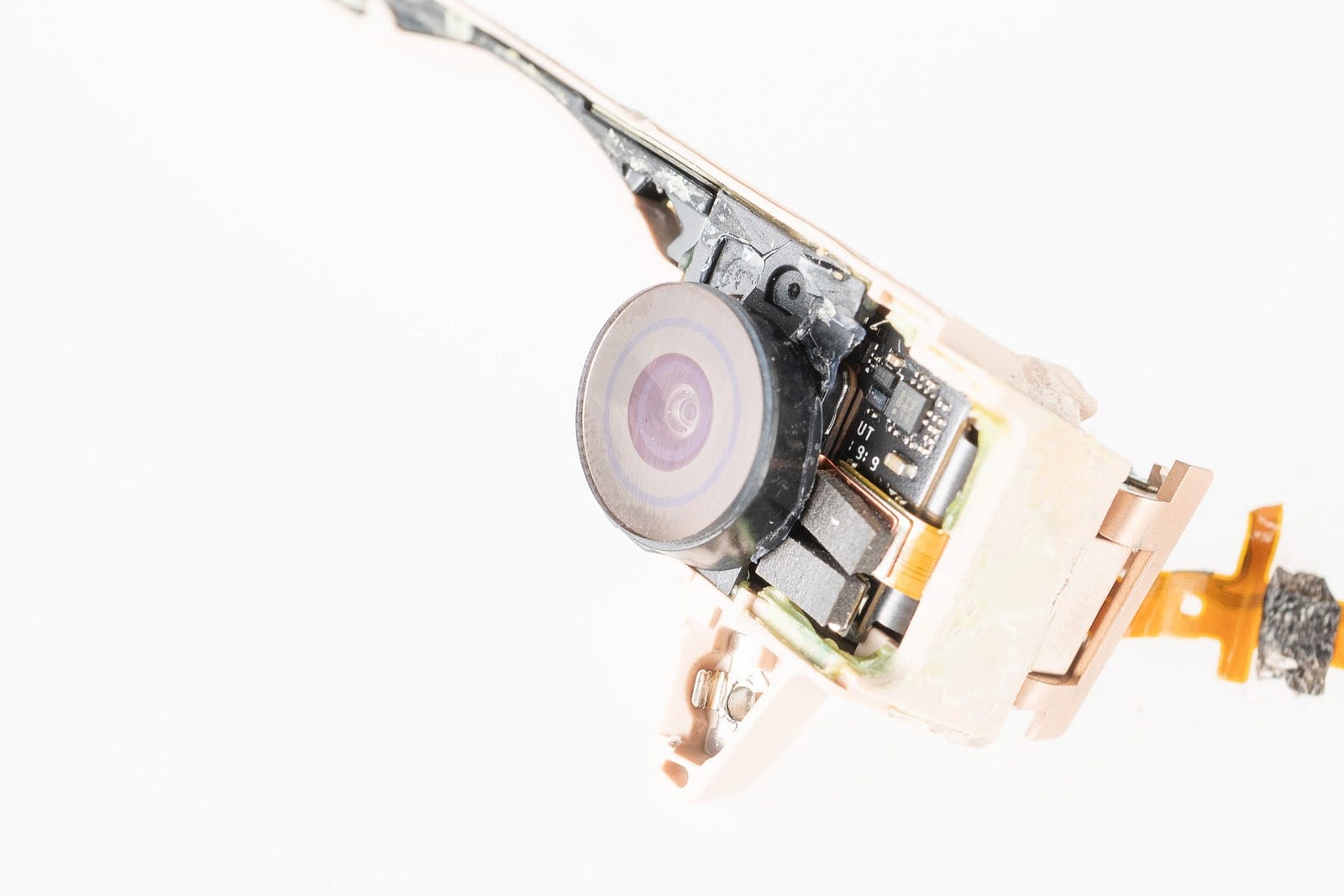

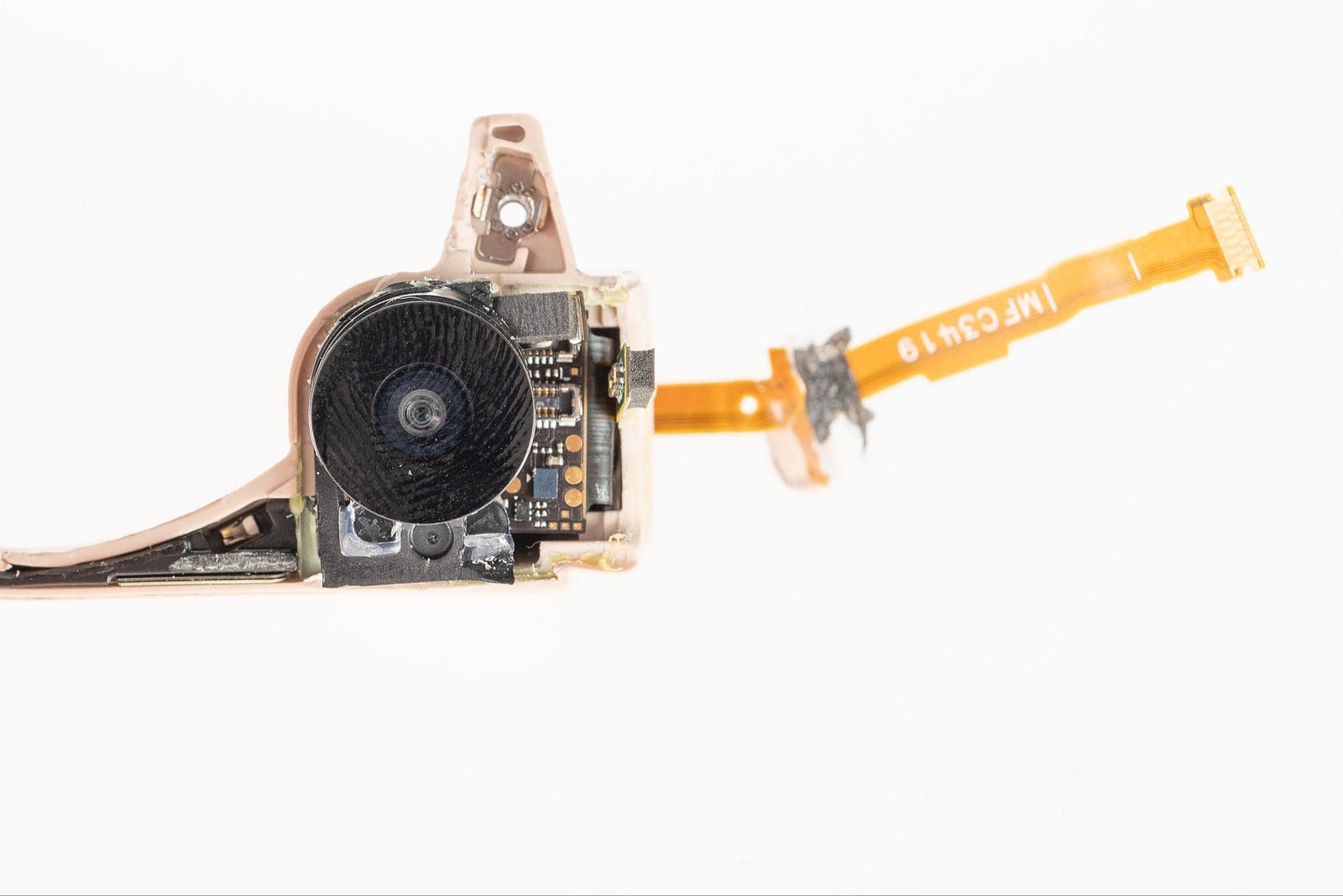

Moving on to the cameras themselves, you’ll see a relatively standard (but tiny) camera module implementation: a rigid flex substrate, the sensor wirebonded out, a plastic housing + lens holder, and a lens barrel screwed into focus.

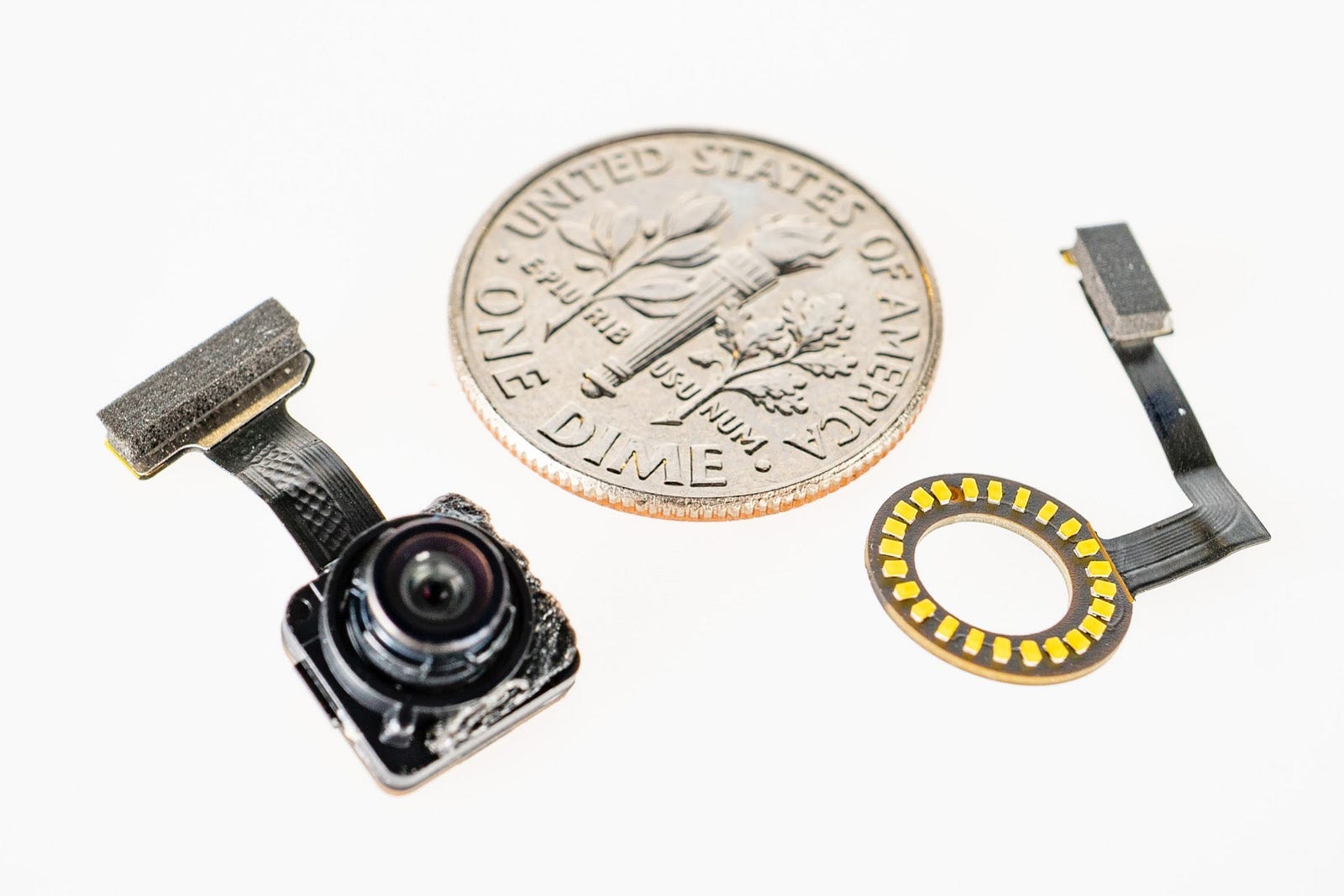

Surrounding the camera is the LED ring that produces the spinning recording indicator we talked about earlier. To me, this one component best illustrates how seriously Snap is taking this product architecture and how committed they were to the specific UI design. It’s a tiny rigid flex (!) with surface mount components on both sides (!!), including 24 (!!!) LEDs. Another company would have just put one LED and called it a day or maybe none at all. This is quite a complex and expensive path to choose.
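To make the cost of that choice concrete, here is a minimal sketch of the kind of logic a 24-LED spinning indicator implies: every frame, something has to compute a brightness for each LED so that a bright "head" appears to chase around the ring with a fading tail. This is a hypothetical illustration, not Snap's firmware; the rotation speed, tail length, and function name are all my own assumptions.

```python
NUM_LEDS = 24  # LED count observed on the ring in the teardown


def ring_frame(t_s: float, rev_per_s: float = 1.0, tail_len: int = 6) -> list[float]:
    """Brightness (0.0 to 1.0) for each LED at time t_s.

    rev_per_s and tail_len are assumed values chosen for illustration.
    """
    # Position of the bright head around the ring, in LED units.
    head = (t_s * rev_per_s * NUM_LEDS) % NUM_LEDS
    frame = []
    for i in range(NUM_LEDS):
        # How many LED positions this LED trails behind the head.
        behind = (head - i) % NUM_LEDS
        # Linear fade: full brightness at the head, dark beyond the tail.
        level = max(0.0, 1.0 - behind / tail_len)
        frame.append(round(level, 3))
    return frame


# Half a second in, at one revolution per second, the head is at LED 12.
frame = ring_frame(0.5)
print("brightest LED:", frame.index(max(frame)))
```

A single status LED needs none of this: no per-LED addressing, no animation timing, no double-sided flex. That is the gap between "indicate recording" and "make recording unmistakable to the person in front of you."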

Here’s a dime as reference for how small this all is. You can easily lose your sense of scale in the photos.

That about does it for the teardown for now. There’s lots more here to dig into (board component + chipset choices, antenna architecture)…if you’re interested in going deeper, swing by the Teardown Library in SF sometime, it’s here to check out.

Here’s the full product knolled out:

Looking back on the history of Snap Spectacles from v1 to v3, it’s clear that Snap is committed to a long-term vision of wearable computing. In Part 2 of this series, we will dive into the significance of gen 3 and 4 as dev platforms, and explore the way artists and creators are experimenting with this new medium. Read on!

Special thanks again to Haje for collaborating on all the teardown photography 👯

(This two-part series is a crosspost between the Bolt blog and the Teardown Library)

Bolt invests at the intersection of the digital and physical world.